I realised I had a problem when I looked at my calendar: Three hours blocked for code reviews. Again. I’m a lead engineer, but somewhere along the way, I stopped being a code writer and became a full-time code reviewer.

Sound familiar? Developers waiting on my feedback. PRs piling up. And when I finally got to review, I was catching the same issues over and over—missing type definitions, repeated code, no error handling, tests that didn’t actually test anything.

Documentation, checklists, and review templates alone didn’t deliver consistent outcomes. Repetitive, low-value checks tend to degrade under time pressure, so automating those tasks ensures the same standards are applied every time.

So I built something for myself: A system where the process reviews itself, and I only step in for the decisions that actually need a human.

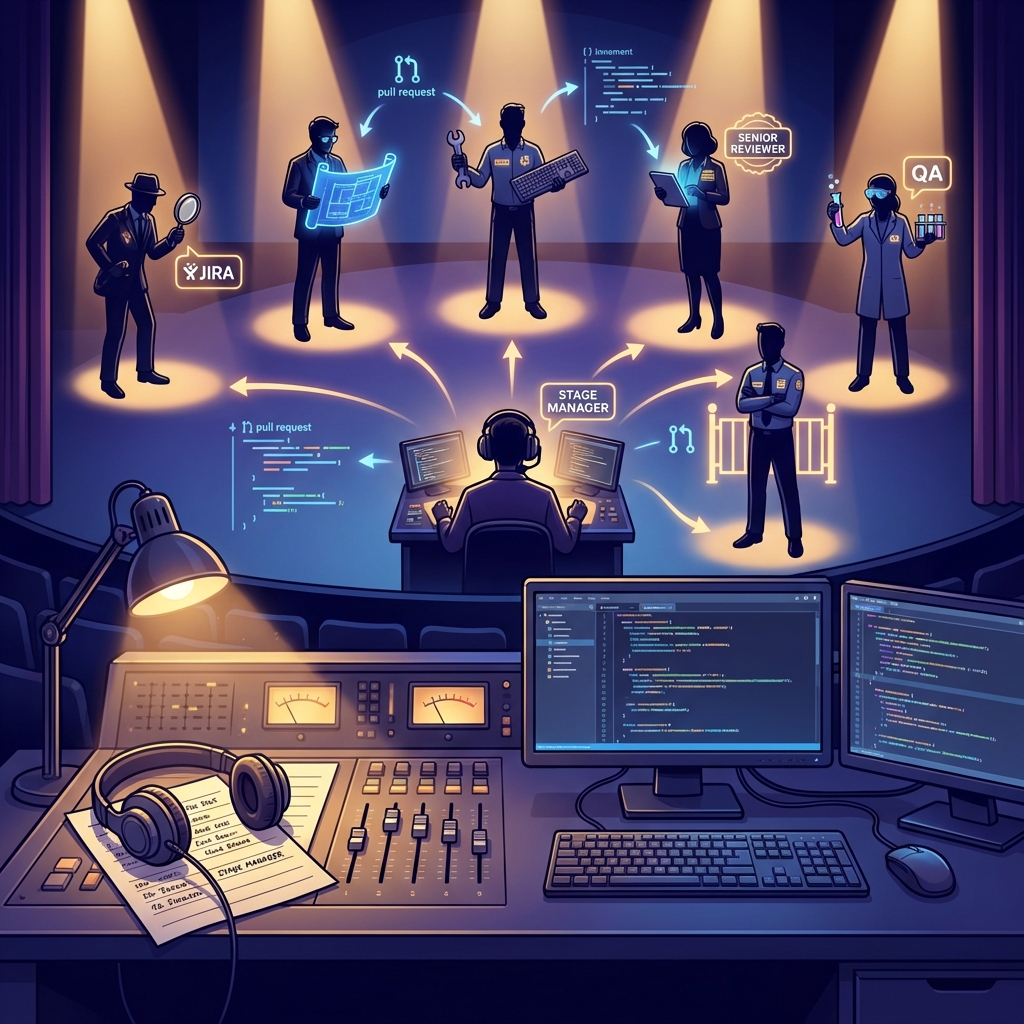

The Theatre Metaphor That Changed Everything

I work in tech for a theatre company—building tools and websites. One day, watching our stage manager coordinate a show, it clicked: they don’t perform. They call the cues. They coordinate. The show runs smoothly not because everyone remembers everything, but because someone ensures each department does their job at the right time.

What if code review worked the same way?

My process makes me the decision-maker, not the checklist runner. Instead of manually verifying each task, the workflow guarantees those checks are automated and surfaced only when they need my attention:

- Jira Analyst extracts clear acceptance criteria and test cases from every ticket

- Solution Architect proposes three implementation options that fit our codebase

- Engineer implements the chosen approach with strict types, error handling, and tests

- Senior Engineer gates maintainability, DRY, and performance considerations

- QA verifies every AC, edge case, and test coverage before sign-off

- Final Reviewer blocks TypeScript/ESLint errors, debug code, and missing types

- Stage Manager coordinates handoffs and only surfaces unclear requirements or architectural decisions for my review

Result: I review architecture and business logic — not repetitive, low-value checks.

What if specialised AI agents handled the repetitive checks, and I only reviewed the architectural decisions and business logic?

That’s what I built. And it gave me back about 10 hours a week.

The Business Impact: Why This Matters

Before building this system

- I spent 15+ hours/week buried in code reviews, leaving little time to actually write code

- ~30% of PRs required significant rework, so my calendar was dominated by repetitive fixes

- Constant context switching drained my focus and increased stress

- Everything waited on me—no time for design, mentorship, or strategic work

Three months later — personal impact

- Review time dropped from 15+ to 4–6 hours/week (roughly a 60–70% reduction), giving me ~10 extra hours/week to do meaningful work

- Rework rate fell to <10% — I stopped re-reading the same mistakes and started moving projects forward

- I spend my review time on architecture and business logic, not syntax or linting

- Fewer interruptions = longer stretches of deep work, better decisions, and faster feature delivery

- More time for mentoring, designing systems, and writing the code I enjoy

- Reduced stress, fewer late-evening fixes, and a measurable improvement in work–life balance

- Career impact: increased influence on product direction and higher job satisfaction from doing higher-leverage work

- The team moves faster without me being the bottleneck — I add strategic value instead of acting as a human linter

The key insight: I don’t need to review everything. I need to review the things that matter.

Humans are bad at repetitive checks. AI is excellent at them. Let the AI catch missing types and DRY violations. I’ll focus on whether we’re building the right thing the right way.

Meet Your Development Team (The AI Edition)

Our workflow uses seven specialised agents, each with a single, focused job. Think of them as your always-available team of specialists:

1. Stage Manager – The Coordinator

Role: Calls the cues, manages the workflow from ticket to PR

This is your entry point. You tell the Stage Manager “work on TICKET-123” and they orchestrate the entire flow, handing off to each specialist in sequence and handling any feedback loops.

Business value: Complete visibility into where every ticket is in the process. No more “is this in code review or QA?” guessing games.

2. Jira Analyst – The Requirements Detective

Role: Extracts clear requirements and test cases from tickets

Takes your (often vague) Jira ticket and produces:

- Crystal-clear acceptance criteria

- Comprehensive test cases in Given/When/Then format

- Flags anything that needs clarification

Example output:

AC1: When user submits invalid email, display error message

AC2: When user submits valid email, proceed to next step

AC3: [NEEDS CLARIFICATION] Should we support plus addressing (email+tag@domain.com)?

Test Cases:

TC-001: Invalid email shows error

TC-002: Valid email proceeds

TC-003: Empty field shows validation error

Business value: Catches ambiguous requirements before coding starts. Prevents the “that’s not what I meant” conversations after implementation.
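To make the clarification flag concrete, here is a minimal TypeScript sketch of how the analyst's output could be modelled so that unclear criteria are surfaced before any handoff. The `AcceptanceCriterion` shape and the `findUnclearCriteria` helper are hypothetical names chosen for illustration; they are not part of Copilot or Jira.

```typescript
// Hypothetical shape for one acceptance criterion in the analyst's output.
interface AcceptanceCriterion {
  id: string;
  text: string;
}

// Marker the Jira Analyst is instructed to add to anything ambiguous.
const CLARIFICATION_MARKER = "[NEEDS CLARIFICATION]";

// Returns the criteria that still need a human answer before coding starts.
function findUnclearCriteria(criteria: AcceptanceCriterion[]): AcceptanceCriterion[] {
  return criteria.filter((ac) => ac.text.indexOf(CLARIFICATION_MARKER) !== -1);
}

const criteria: AcceptanceCriterion[] = [
  { id: "AC1", text: "When user submits invalid email, display error message" },
  { id: "AC2", text: "When user submits valid email, proceed to next step" },
  {
    id: "AC3",
    text: "[NEEDS CLARIFICATION] Should we support plus addressing (email+tag@domain.com)?",
  },
];

const unclearIds = findUnclearCriteria(criteria).map((ac) => ac.id);
console.log(unclearIds.join(", ")); // AC3
```

Anything returned here is a question for a human, which is the point of the flag: the ticket does not move to the Solution Architect until the list is empty.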

3. Solution Architect – The Options Provider

Role: Proposes three implementation approaches (Simple, Balanced, Comprehensive)

Never just one solution. Always three options with trade-offs:

- Option 1: Quick and dirty (good for experiments)

- Option 2: Balanced approach (recommended for most work)

- Option 3: Future-proof (good for core features)

Business value: Empowers your team to make informed trade-offs. Junior developers learn to think architecturally. You avoid both over-engineering and technical debt.

4. Engineer – The Builder

Role: Implements the chosen solution following best practices

Writes clean TypeScript/JavaScript code with:

- Proper type safety

- Error handling

- Consistency with your existing patterns

- Comprehensive tests

Business value: Consistent code quality regardless of who’s implementing. New hires follow established patterns from day one.
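As an illustration of what "proper type safety" and "error handling" can look like in practice, here is a small sketch based on the email example from the Jira Analyst section. The `Result` type, the regular expression, and the error messages are assumptions made for this example, not the code the Engineer agent actually produced.

```typescript
// Explicit result type: callers must handle the failure branch, and no `any` is needed.
type Result<T> = { ok: true; value: T } | { ok: false; error: string };

// Constants extracted so there are no magic strings in the logic.
// The pattern is a deliberately loose, illustrative check, not a full RFC validator.
const EMAIL_PATTERN = /^[^\s@]+@[^\s@]+\.[^\s@]+$/;
const ERROR_EMPTY = "Email is required";
const ERROR_INVALID = "Email address is not valid";

function validateEmail(input: string): Result<string> {
  const trimmed = input.trim();
  if (trimmed.length === 0) {
    return { ok: false, error: ERROR_EMPTY };
  }
  if (!EMAIL_PATTERN.test(trimmed)) {
    return { ok: false, error: ERROR_INVALID };
  }
  return { ok: true, value: trimmed };
}

const outcome = validateEmail("not-an-email");
console.log(outcome.ok ? outcome.value : outcome.error); // Email address is not valid
```

The discriminated union is one common way to make error handling visible in the type system; a codebase that throws typed errors instead would express the same rule differently.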

5. Senior Engineer – The Quality Gatekeeper

Role: Critical code review focused on maintainability

Reviews for:

- DRY violations (repeated code)

- Type safety

- Error handling

- Clean code principles

- Performance issues

Categorises issues by severity (Critical/Important/Suggestions) so you know what’s blocking vs. nice-to-have.

Business value: Your senior engineers (like me) review high-level decisions, not syntax. The AI catches the tedious stuff.
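The severity rule can be sketched as a tiny decision function. This is an assumed model of the gate, with hypothetical names (`ReviewIssue`, `decideReview`); in the real setup the decision is made by the agent following its prompt, not by code like this.

```typescript
type Severity = "critical" | "important" | "suggestion";
type Verdict = "approve" | "request-changes";

// Hypothetical shape for one finding from the Senior Engineer review.
interface ReviewIssue {
  severity: Severity;
  message: string;
}

// Approve only when nothing more serious than a suggestion was found.
function decideReview(issues: ReviewIssue[]): Verdict {
  const hasBlocking = issues.some((issue) => issue.severity !== "suggestion");
  return hasBlocking ? "request-changes" : "approve";
}

const firstPass: ReviewIssue[] = [
  { severity: "important", message: "Validation logic duplicated in two components" },
  { severity: "suggestion", message: "Consider memoising the derived list" },
];
const secondPass: ReviewIssue[] = [
  { severity: "suggestion", message: "Consider memoising the derived list" },
];

console.log(decideReview(firstPass)); // request-changes
console.log(decideReview(secondPass)); // approve
```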

6. QA Engineer – The Verification Specialist

Role: Verifies functionality and test coverage

Runs all tests, verifies each acceptance criterion is met, and checks for edge cases. Won’t approve unless:

- All tests pass

- Every AC has test coverage

- Edge cases are handled

Business value: Catches gaps before production. No more “we should have tested that” postmortems.
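The "every AC has test coverage" rule is easy to express as a check. The sketch below assumes each test case records which criteria it covers; the `TestCase` shape and function names are hypothetical and only illustrate the idea.

```typescript
// Hypothetical record of one test case and the acceptance criteria it exercises.
interface TestCase {
  id: string;
  covers: string[];
  passed: boolean;
}

// Lists the acceptance criteria that no test case claims to cover.
function uncoveredCriteria(criterionIds: string[], testCases: TestCase[]): string[] {
  return criterionIds.filter(
    (id) => !testCases.some((tc) => tc.covers.indexOf(id) !== -1),
  );
}

// QA sign-off: at least one test, all tests pass, and no criterion is left uncovered.
function qaApproves(criterionIds: string[], testCases: TestCase[]): boolean {
  return (
    testCases.length > 0 &&
    testCases.every((tc) => tc.passed) &&
    uncoveredCriteria(criterionIds, testCases).length === 0
  );
}

const criterionIds = ["AC1", "AC2", "AC3"];
const testCases: TestCase[] = [
  { id: "TC-001", covers: ["AC1"], passed: true },
  { id: "TC-002", covers: ["AC2"], passed: true },
  { id: "TC-003", covers: ["AC1"], passed: true },
];

console.log(uncoveredCriteria(criterionIds, testCases).join(", ")); // AC3
console.log(qaApproves(criterionIds, testCases)); // false
```

All three tests pass here, yet sign-off is refused because AC3 has no test. That is exactly the gap a tired human reviewer tends to miss.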

7. Final Reviewer – The Zero-Tolerance Checker

Role: Production readiness gate—zero errors allowed

This is your final quality gate. Runs error checks and blocks the PR if it finds:

- TypeScript errors

- ESLint violations

- Missing types

- Debug code (console.logs, TODOs)

Business value: Nothing reaches production with basic errors. Period.
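For a sense of what the debug-code check amounts to, here is a rough line-based scan. It is only a sketch with hypothetical names (`Finding`, `findDebugCode`); in a real project the same effect is usually achieved with ESLint rules such as `no-console` and `no-warning-comments`, which the Final Reviewer would then pick up as ordinary lint violations.

```typescript
// Hypothetical shape for one detected leftover.
interface Finding {
  line: number;
  rule: string;
  text: string;
}

// Patterns for the two kinds of debug residue named in the list above.
const DEBUG_RULES: { rule: string; pattern: RegExp }[] = [
  { rule: "console.log", pattern: /\bconsole\.log\s*\(/ },
  { rule: "TODO", pattern: /\bTODO\b/ },
];

// Scans source text line by line and reports every match with its line number.
function findDebugCode(source: string): Finding[] {
  const findings: Finding[] = [];
  source.split("\n").forEach((text, index) => {
    for (const { rule, pattern } of DEBUG_RULES) {
      if (pattern.test(text)) {
        findings.push({ line: index + 1, rule, text: text.trim() });
      }
    }
  });
  return findings;
}

const sample = [
  "export function add(a: number, b: number): number {",
  "  console.log('adding', a, b);",
  "  // TODO: handle overflow",
  "  return a + b;",
  "}",
].join("\n");

const findings = findDebugCode(sample);
console.log(findings.map((f) => `${f.line}:${f.rule}`).join(", ")); // 2:console.log, 3:TODO
```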

The Workflow: How It All Comes Together

Here’s what happens when you start a ticket:

You: "@stage-manager work on TICKET-456"

Stage Manager: "Starting workflow..."

┌─────────────────────────────────────────────┐

│ 1. Jira Analyst │

│ ↓ Extracts requirements + test cases │

├─────────────────────────────────────────────┤

│ 2. Solution Architect │

│ ↓ Proposes 3 implementation options │

├─────────────────────────────────────────────┤

│ 3. You choose: "Let's do Option 2" │

│ ↓ │

├─────────────────────────────────────────────┤

│ 4. Engineer │

│ ↓ Implements the solution │

├─────────────────────────────────────────────┤

│ 5. Senior Engineer │

│ ↓ Code review (finds 2 minor issues) │

├─────────────────────────────────────────────┤

│ 6. Engineer (revision) │

│ ↓ Fixes issues │

├─────────────────────────────────────────────┤

│ 7. Senior Engineer │

│ ↓ Approved │

├─────────────────────────────────────────────┤

│ 8. QA Engineer │

│ ↓ All tests pass, ACs met │

├─────────────────────────────────────────────┤

│ 9. Final Reviewer │

│ ↓ No errors detected │

├─────────────────────────────────────────────┤

│ 10. Stage Manager creates PR │

│ ✅ Ready for merge │

└─────────────────────────────────────────────┘

Key advantage: Feedback loops happen early. If requirements are unclear, you find out before code is written. If the approach is wrong, you course-correct before implementation. If there are bugs, they’re caught before human review.
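The flow in the diagram behaves like a small state machine: each gate either passes the work forward or sends it back to the Engineer. The sketch below is an assumed simplification (stage names are hypothetical, and the first three stages always advance); the actual handoffs are declared in the agent files described in the next section.

```typescript
type Stage =
  | "analysis"
  | "architecture"
  | "implementation"
  | "code-review"
  | "qa"
  | "final-review"
  | "pr-created";

type Outcome = "pass" | "fail";

// Any failed gate loops back to implementation; a pass moves one step forward.
function nextStage(current: Stage, outcome: Outcome): Stage {
  switch (current) {
    case "analysis":
      return "architecture";
    case "architecture":
      return "implementation";
    case "implementation":
      return "code-review";
    case "code-review":
      return outcome === "pass" ? "qa" : "implementation";
    case "qa":
      return outcome === "pass" ? "final-review" : "implementation";
    case "final-review":
      return outcome === "pass" ? "pr-created" : "implementation";
    case "pr-created":
      return "pr-created";
  }
}

// Replays the run from the diagram: the first code review requests changes.
const outcomes: Outcome[] = ["pass", "pass", "pass", "fail", "pass", "pass", "pass", "pass"];
let current: Stage = "analysis";
const visited: Stage[] = [current];
for (const outcome of outcomes) {
  current = nextStage(current, outcome);
  visited.push(current);
}

console.log(visited.join(" -> "));
```

Modelling the loop this way makes it obvious that a rejected review costs one extra implementation pass, not a restart of the whole ticket.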

Implementation Guide: Build This Yourself

This runs entirely in VS Code using GitHub Copilot’s multi-agent system. Here’s how to set it up:

Prerequisites

- VS Code with GitHub Copilot subscription

- GitHub repository

- (Optional) Jira account with Atlassian MCP integration

Step 1: Create Your Agent Files

In VS Code, create a .prompts directory or configure your user-level prompts folder. You’ll create seven .agent.md files:

File structure:

.prompts/

├── stage-manager.agent.md

├── jira-analyst.agent.md

├── solution-architect.agent.md

├── engineer.agent.md

├── senior-engineer.agent.md

├── qa-engineer.agent.md

└── final-reviewer.agent.md

Step 2: Configure the Stage Manager

Create stage-manager.agent.md:

---

description: 'Coordinates the delivery workflow from ticket to PR'

tools: ['vscode', 'read', 'search', 'github/*', 'atlassian/*']

handoffs:

- agent: jira-analyst

  label: "📋 Start: Analyze Ticket"

- agent: solution-architect

  label: "🏗️ Propose Solutions"

- agent: engineer

  label: "👨‍💻 Implementation"

- agent: senior-engineer

  label: "👀 Code Review"

- agent: qa-engineer

  label: "🧪 QA Testing"

- agent: final-reviewer

  label: "✅ Final Review"

---

# Stage Manager

Coordinate the entire workflow. Hand off to each agent in sequence.

## Your Role

1. Take a ticket number from the user

2. Hand to Jira Analyst for requirements

3. Hand to Solution Architect for options

4. Hand to Engineer for implementation

5. Hand to Senior Engineer for review

6. If changes needed, loop back to Engineer

7. Hand to QA for testing

8. Hand to Final Reviewer for production check

9. If approved, create the PR

10. If blocked, send back to Engineer with feedback

Track progress and keep user informed at each step.

Step 3: Configure the Jira Analyst

Create jira-analyst.agent.md:

---

description: 'Extract requirements and test cases from tickets'

tools: ['atlassian/*', 'search', 'read']

handoffs:

- agent: solution-architect

  label: "🏗️ Propose Solutions"

---

# Jira Analyst

Extract clear requirements from Jira tickets.

## Your Task

1. Fetch the ticket details

2. Extract acceptance criteria (AC1, AC2...)

3. Write test cases in Given/When/Then format

4. Flag anything unclear with [NEEDS CLARIFICATION]

## Output Format

```

# TICKET-XXX: [Brief description]

## Acceptance Criteria

AC1: Given [context], When [action], Then [result]

AC2: [Another requirement]

## Test Cases

TC-001: [Test name]

Given: [initial state]

When: [user action]

Then: [expected outcome]

```

Keep it clear and testable.

Step 4: Configure the Solution Architect

Create solution-architect.agent.md:

---

description: 'Propose 3 implementation approaches'

tools: ['vscode', 'read', 'search', 'github/*']

handoffs:

- agent: engineer

  label: "👨‍💻 Implement Solution"

---

# Solution Architect

Propose three ways to solve the ticket.

## Your Task

Search the codebase for existing patterns, then propose:

**Option 1: Simple**

- Fastest to implement

- May have limitations

- Good for: MVPs, experiments

**Option 2: Balanced** ⭐

- Clean, follows patterns

- Reasonable effort

- Good for: Most tickets

**Option 3: Comprehensive**

- Future-proof

- More upfront work

- Good for: Core features

Include code examples and recommend one option.

Step 5: Configure the Engineer

Create engineer.agent.md:

---

description: 'Implement the solution with clean code'

tools: ['vscode', 'read', 'search', 'edit', 'create', 'run']

handoffs:

- agent: senior-engineer

  label: "👀 Code Review"

---

# Engineer

Build the chosen solution with clean TypeScript code.

## Your Task

1. Implement following the approved approach

2. Write proper types (no `any`)

3. Handle errors properly

4. Write tests for all code

5. Follow existing code patterns

## Quality Checklist

- [ ] All functions have return types

- [ ] Error handling in place

- [ ] Constants extracted (no magic strings)

- [ ] Tests written and passing

- [ ] Code formatted (prettier)

Step 6: Configure the Senior Engineer

Create senior-engineer.agent.md:

---

description: 'Code review for quality and maintainability'

tools: ['vscode', 'read', 'search', 'get_errors']

handoffs:

- agent: engineer

  label: "🔄 Request Changes"

- agent: qa-engineer

  label: "🧪 Proceed to QA"

---

# Senior Engineer

Review code critically for maintainability.

## Review Focus

1. **Type Safety** - No `any` types

2. **DRY** - No repeated code

3. **Error Handling** - Meaningful error messages

4. **Clean Code** - Clear names, single responsibility

5. **Performance** - No obvious inefficiencies

## Issue Severity

🚨 **Critical**: Blocks PR (security, broken functionality)

⚠️ **Important**: Should fix (DRY violations, poor naming)

💡 **Suggestions**: Nice to have (optimizations)

Approve if only minor suggestions, otherwise request changes.

Step 7: Configure the QA Engineer

Create qa-engineer.agent.md:

---

description: 'Verify functionality and test coverage'

tools: ['vscode', 'read', 'run', 'terminal']

handoffs:

- agent: engineer

  label: "🔧 Fix Issues"

- agent: final-reviewer

  label: "✅ Final Review"

---

# QA Engineer

Verify the code works and tests are comprehensive.

## Your Task

1. Run all tests (unit, integration, e2e)

2. Verify each acceptance criterion is tested

3. Check edge cases are covered

4. Try to break it

## Test Coverage Checks

- Happy path tested

- Error cases tested

- Edge cases tested (nulls, boundaries, invalid inputs)

- Integration points tested

Pass only if all ACs are met and tests are comprehensive.

Step 8: Configure the Final Reviewer

Create final-reviewer.agent.md:

---

description: 'Production readiness gate - zero errors allowed'

tools: ['vscode', 'get_errors', 'read']

handoffs:

- agent: engineer

  label: "🔧 Fix Errors"

- agent: stage-manager

  label: "🚀 Create PR"

---

# Final Reviewer

Final quality gate. Nothing ships with errors.

## Your Task

1. **ALWAYS run `get_errors` tool first**

2. If ANY errors → Block and send back to engineer

3. If no errors → Approve for PR

## Zero Tolerance For

- TypeScript errors

- ESLint violations

- Missing types

- Debug code (console.logs, TODOs)

- Commented-out code

This is the last line of defense. Be strict.

Step 9: Using the System

Once configured, here’s how you use it:

Start a ticket:

@stage-manager work on TICKET-123

Skip to a specific phase (if needed):

@stage-manager skip to code review for TICKET-123

The Stage Manager handles everything else, calling each agent in sequence and managing feedback loops.

Step 10: Customisation Tips

For your codebase:

- Update the Engineer agent with your specific tech stack details

- Add your coding standards to the Senior Engineer review criteria

- Include your testing frameworks in QA Engineer instructions

For your process:

- Add additional checks to Final Reviewer (e.g., security scanning)

- Customise the Solution Architect to your architecture patterns

- Integrate with your specific Jira workflow

What You’ll Notice Immediately

Week 1: The agents feel slow. You could review code faster yourself. Resist the urge to bypass them. (I almost gave up here.)

Week 2: You stop seeing the same issues repeatedly. The AI catches missing types before you do.

Week 3: You realise you’re only reviewing architecture and business logic. The syntax is always clean.

Week 4: A junior developer ships a PR that passes all checks. You review it in 5 minutes instead of 30.

Month 2: You write code again. Actually write code. It feels amazing.

Month 3: You wonder how you ever did code review without this. The thought of going back is exhausting.

The Economics: Why This Makes Business Sense

Let me share my personal math as a lead engineer:

My time before:

- Code review: 15 hours/week

- Catching repeated issues: Exhausting

- Context switching: Constant interruptions

- My hourly rate: Let’s say $100/hour = $1,500/week on code review

My time after:

- First-pass review by AI: ~10 hours saved per week

- Focus time on architecture: Actual value-add work

- Fewer interruptions: Better flow state

- Value delivered: Strategic work instead of syntax checks

My ROI: I got back 10 hours/week. That’s 40 hours/month to write code, design systems, and solve hard problems instead of pointing out missing semicolons and `any` types.

For my company:

- GitHub Copilot: ~$80/month for my seat

- Time saved: 40 hours/month of senior engineering time

- ROI: 50x minimum
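For anyone who wants to plug in their own numbers, the arithmetic behind the 50x figure is below. The inputs are the ones quoted in this section; the four-weeks-per-month rounding is an assumption that matches the 40 hours/month stated above.

```typescript
// Inputs taken from the figures quoted in this section.
const HOURS_SAVED_PER_WEEK = 10;
const WEEKS_PER_MONTH = 4; // rounding assumption: 10 h/week is treated as 40 h/month
const HOURLY_RATE_USD = 100;
const COPILOT_COST_PER_MONTH_USD = 80;

const hoursSavedPerMonth = HOURS_SAVED_PER_WEEK * WEEKS_PER_MONTH;
const valueSavedPerMonthUsd = hoursSavedPerMonth * HOURLY_RATE_USD;
const roiMultiple = valueSavedPerMonthUsd / COPILOT_COST_PER_MONTH_USD;

console.log(`${hoursSavedPerMonth} hours/month`); // 40 hours/month
console.log(`$${valueSavedPerMonthUsd} of engineering time`); // $4000 of engineering time
console.log(`${roiMultiple}x return on the seat cost`); // 50x return on the seat cost
```

Swap in your own hourly rate and hours saved; the multiple stays large for most realistic values, which is why the seat cost is rarely the deciding factor.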

But the real value isn’t the hours. It’s:

- Getting back to building instead of being a full-time reviewer

- Teaching by example (juniors see patterns from AI feedback)

- Consistency (same standards applied every time)

- Focus (I review architecture, not syntax)

Common Pitfalls to Avoid

1. Skipping steps under pressure

“This is a hotfix, let’s just push it.” Don’t. The system is fastest when you trust it.

2. Not customising agents

Generic agents work, but agents tuned to your codebase work 10x better. Invest the time.

3. Bypassing for “simple” changes

Simple changes cause production bugs too. Run everything through the system.

4. Not iterating on agents

Your first agent prompts won’t be perfect. Refine them based on what issues slip through.

5. Treating it like documentation

This isn’t a process doc humans ignore. It’s an automated system that runs every time. Embrace that difference.

The Future: Where This Goes Next

We’re already experimenting with:

- Automated performance testing – Agent that checks bundle sizes and render times

- Security scanning agent – Reviews for common vulnerabilities before PR

- Documentation agent – Auto-generates API docs and updates README

- Deployment agent – Handles the release process after PR merge

The pattern is clear: Any repetitive process can become an agent.

Getting Started Today

You don’t need to implement all seven agents at once. Start with three:

- Jira Analyst – Catches requirement issues early

- Engineer – Ensures consistent code quality

- Final Reviewer – Blocks obvious errors

Get those working, learn the system, then add the others.

The goal isn’t perfection. It’s consistency. And consistency compounds.

The Bottom Line

I didn’t build this because my team was bad at their jobs. I built it because I was tired of being a human linter.

Great engineers shouldn’t spend their time catching missing types and pointing out DRY violations. That’s what computers are for.

The best use of a lead engineer is architecture, mentorship, and solving hard problems. Not being a syntax checker.

This system gave me back my time and gave me back my job. I’m an engineer again, not just a reviewer.

And if you’re a lead engineer drowning in code reviews, you deserve the same.

Ready to build this? The entire setup takes an afternoon. You’ll feel the difference in a week. And a month from now, you’ll wonder how you ever reviewed code without it.

Questions? Feel free to reach out. I’m happy to help other lead engineers escape code review hell.

Want the agent templates? They’re all in this post. Copy, customise, and get your time back.